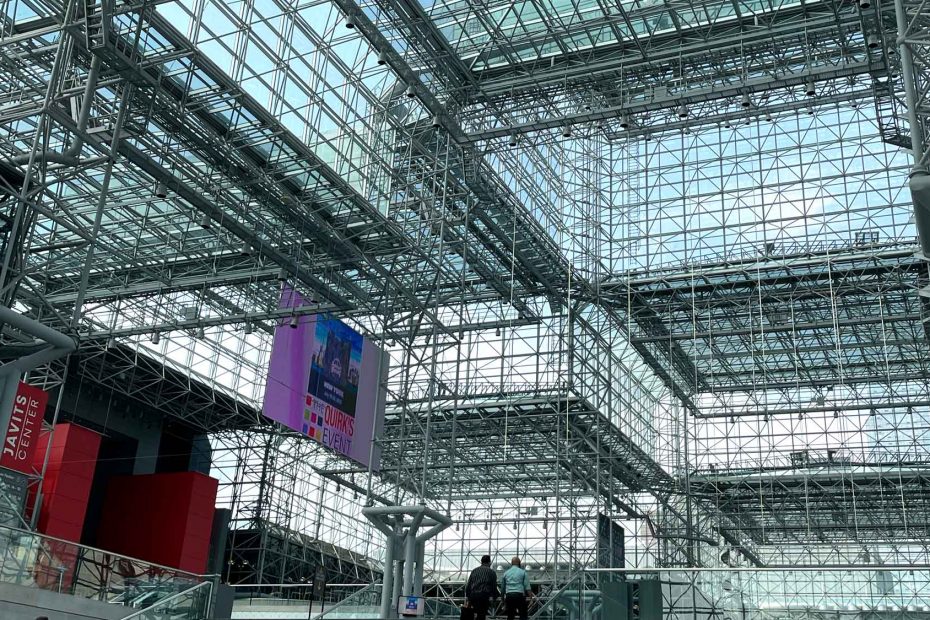

When I attended Quirk’s New York this year, one of the latest market research conferences, I tried to attend every keynote presentation that had AI in its title.

It was nearly impossible. There were so many that they overlapped. Still, I walked away from this conference with new insights into AI, including its capabilities, how to protect data, and where humans fit into this rapidly evolving world.

Most of the presentations I attended were about generative AI and, more specifically, ChatGPT. These seminars covered basic explanations of what the technology is, how to write effective prompts, its benefits (such as helping researchers develop questionnaires), and the various threats it poses. Among those threats, data quality was one of the most prevalent concerns, with presenters citing chatbots that impersonate real participants and compromise data validity. Data validity is crucial: when the data can’t be trusted, the project is dead on arrival. These presentations provided practical strategies to counter the risks, but audiences seemed focused on a slightly different set of questions. For example:

- Where does AI fit in?

- Should we really take the human out when we’re trying to be human-centric?

- How can I make sure my data doesn’t get out there (and come back to bite me)?

I get what AI is, but I’m still asking myself, “so what?”

There is a possible explanation for the disconnect between the power of recent AI advancements and experts’ ability to find clear ways to take advantage of them: AI is fundamentally a technical discipline, spearheaded by data scientists and software engineers. While it is easy to demonstrate how powerful a chatbot is, demonstrating how AI can fit into a larger workflow isn’t as straightforward.

The metaphor of a solution looking for a problem comes to mind. While AI has shown incredible capability, the ability to mimic human reasoning alone isn’t enough to solve complex problems. Comparing AI to a human gives a handy example: both can demonstrate critical thinking abilities (mimicked abilities, in the case of AI), but without subject matter expertise, neither can rely on that ability as the only tool to solve problems.

Reasoning alone, natural or artificial, is only part of the equation. To be useful, reasoning must be framed in the context of an objective and complemented by other tasks in a larger workflow.

How AI makes us more—not less—human-centric

The second question—taking the human out of the process—can be interpreted in two ways: replacing participants with artificial sources of opinion, and taking the analyst out of the analysis process. Replacing participants is, in my view, a terrible idea. A connecting theme across presentations was the increased need for authenticity and empathy in a changing world. How can we be more empathetic to people when we are trying to understand them by proxy?

As for taking the analyst out of the analysis process, I believe this is a misconception. Rather than taking the human out, AI is about empowering analysts to be even more human. In my experience, when analysts work with large amounts of data, they face two challenges: first, people are known to make mistakes when they’re overwhelmed by information; and second, they are likely to miss important signals because of the amount of noise.

These challenges result in mistakes and missed strategy-altering insights. When AI is seen as a tool rather than as a replacement, we can start working through the various steps in a given workflow to see where AI can empower workers.

For example, AI is especially effective at identifying patterns buried in large amounts of information. This ability to find patterns makes AI an excellent option to organize data, identify and discard noise, and thus let insights emerge. Sparing humans from this type of filtering work enables them to look at distilled data with their mental bandwidth intact. Therefore, humans aren’t taken out of the flow but rather given the tools to exercise more—not less—of the type of thinking that separates them from even the smartest of the AI systems out there.

How to deploy AI responsibly

The third question is all about using AI inside an organization’s own environment. A major concern brought up during the conference was that data, if leaked, can be used to mimic original works – enabling malicious actors to create fake messaging that appears authentic. This was particularly worrisome to companies that work hard to keep messaging true to their ethos. The last thing they want is to have their communications hijacked to tell a story that misleads consumers, exposes their competitive strategy, and ultimately harms the brand.

From the agencies’ perspective, the concern about data leaks was related to their ability to protect the data that their clients have entrusted to them. In both of these cases, it was surprising to me that none of the presentations I attended discussed the availability of alternatives to OpenAI that can be implemented within organizations’ own technical environments, or “firewalls.” For example, at the time of this publication, two large language models that compete with GPT, Llama 2 and Falcon, can be used inside an organization’s firewall, just like all other systems that contain sensitive information today.

As the founder and CEO of a company focused on AI engineering, I found this conference enlightening. These types of events bring together experts from different sides of the ecosystem, and ultimately help those of us working in AI understand what people really want from it.